Why Employees Ignore Expensive AI Tools at Work

A familiar pattern is starting to show up in AI rollouts.

A business owner spends thousands of dollars setting up an internal AI system. Maybe it is a chatbot. Maybe it is a knowledge assistant. Maybe it is a workflow tool with a cleaner interface than the old process.

Nobody uses it.

The system is live. The money is spent. Leadership believes it can help. But employees keep doing their work the old way until usage becomes mandatory and starts showing up in performance tracking.

That is a rough way to learn an expensive lesson: buying AI is much easier than changing how people work.

I do not think that is a healthy way to roll out AI. If a tool only gets used after leadership ties it to performance scores, that is not proof of product-market fit inside the company. It is a signal that the original rollout missed the real workflow.

The Tool Is Rarely the Whole Problem

When leaders talk about failed AI adoption, they often frame it as employee resistance: people are scared of replacement, too busy to learn, or too attached to the old process.

Sometimes that is true, but I think it is too easy.

Gartner found something more interesting in a 2025 employee survey: many employees who can use AI still do not use it because their coworkers are not using it either. Gartner's read was that the root issue is often rushed implementation with too little attention paid to workforce impact.

That sounds right. Most employees are not sitting around hoping the company wastes money. They are responding to incentives, habits, risk, and pressure.

If the old workflow is still accepted, the new AI tool is optional.

If the AI output is not trusted, the employee has to double-check everything.

If nobody knows when the tool should be used, people will avoid the extra step.

If managers do not model the behavior, the tool feels like another executive experiment.

That is not a software problem. That is an adoption design problem.

Implementation Is Not Adoption

There is a quiet gap between "we rolled out AI" and "AI changed the way work gets done."

Gallup's 2026 AI indicator shows this clearly. As of February 2026, 41% of U.S. employees said their organization had integrated AI tools, but only 28% used AI a few times a week or more. Gallup called this an implementation-adoption lag: implementation does not guarantee use.

That gap matters because leaders can easily mistake deployment for progress.

Licenses are active, training happened, and the dashboard looks alive. None of that proves the work changed. The useful question is simpler: does any part of the job now happen differently because of the tool?

If the answer is no, the AI system is probably sitting beside the workflow instead of inside it.

Mandatory Usage Is A Warning Sign

I understand the frustration. If a company has already paid for a system and nobody is using it, leadership will start looking for levers. Performance measurement is an obvious lever.

But reaching for that lever should be treated as a warning sign and a last resort, not the default adoption strategy.

You can get people to open the tool. You can get them to paste prompts. You can get them to include an AI step in a checklist. But if it turns a 12-minute policy lookup into another review step, mandatory usage becomes theater. If it turns that same lookup into a 30-second answer people trust, they will notice.

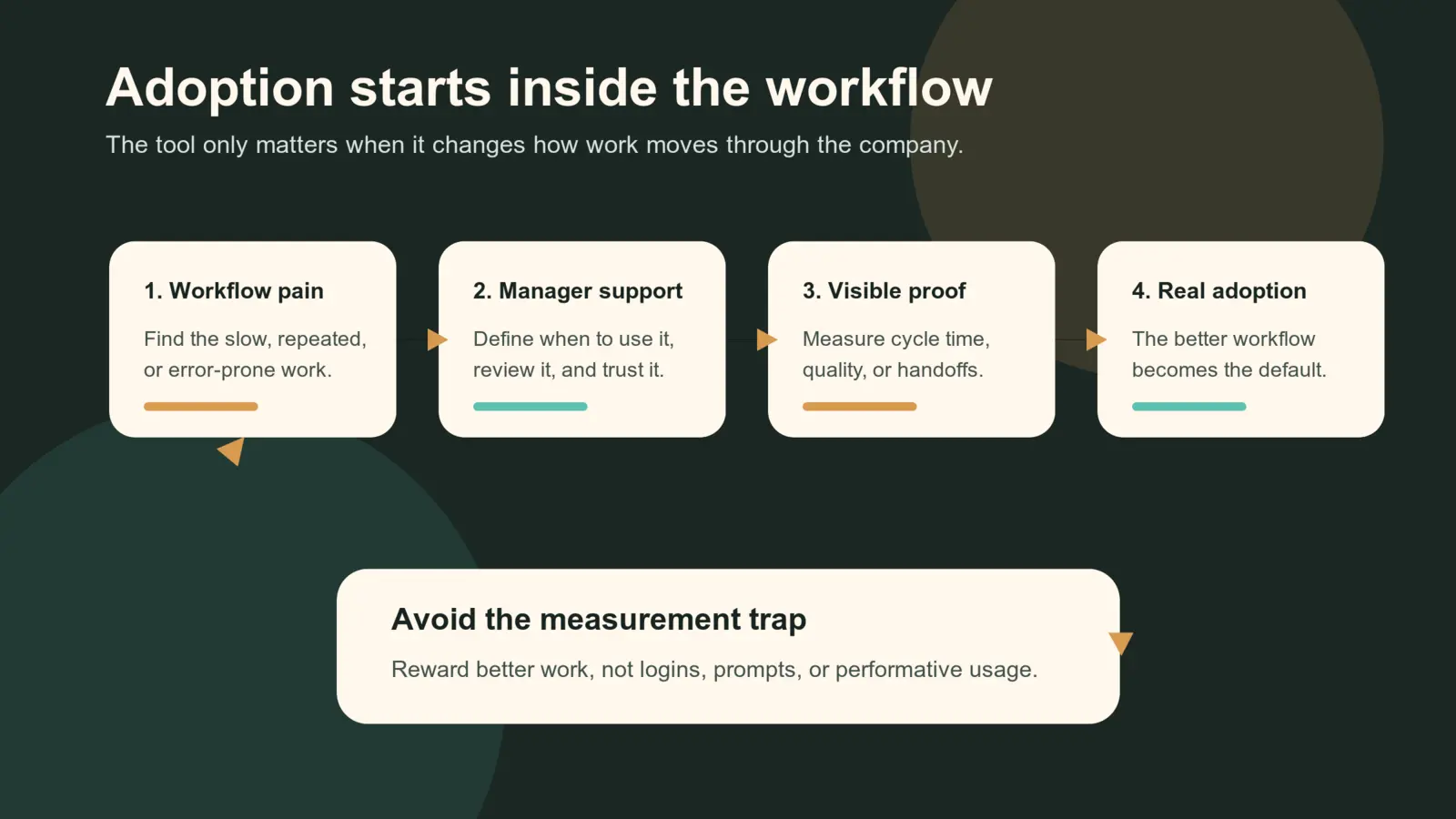

This is the measurement trap. If the company rewards tool usage instead of better work, employees will optimize for the metric. Leaders might see more prompts, more logins, and more AI-assisted outputs while the actual business process stays the same.

That is why I would be careful about making AI usage itself part of performance measurement. It can create compliance without trust. It can also punish employees for noticing that the tool is slow, risky, or poorly matched to the job.

Measure the work instead: cycle time, rework, customer response time, handoff clarity, error rate, or decision quality. If those do not improve, more tool activity is just activity.

The real question is not "how do we force employees to use this?"

It is "what part of the job becomes obviously better when this tool is used?"

That is where many AI rollouts get thin. The business buys a general-purpose system, then expects employees to discover the use cases on top of their existing work.

Some will. Most will not.

Start With Workflow Pain, Not AI Ambition

The companies that get adoption right usually do not start with "we need AI."

They start with a broken or expensive part of work:

- Customer questions take too long to triage.

- Sales notes never make it into the CRM cleanly.

- Support agents rewrite the same responses every day.

- QA teams spend too much time summarizing failures.

- Managers cannot find the latest policy or client context.

Then they ask: can AI remove friction from this specific workflow?

That is a much better starting point than buying a central chatbot and hoping people find a reason to care.

The difference is ownership. A generic AI tool belongs to nobody. A workflow improvement belongs to a team with a problem.

The second difference is subtraction. If AI takes over part of the work, something old should get smaller or disappear. Otherwise the company has not improved the workflow. It has added another step.

Managers Matter More Than The Announcement

Gallup's data also points to the role managers play. In one 2026 survey, frequent AI use was much higher when employees said tools were integrated into workflows, managers actively supported use, experimentation was encouraged, and policies were clear. Employees with strong manager support reported frequent use at 78%, compared with 44% without it.

Microsoft's 2026 Work Trend Index points in the same direction: organizational factors such as culture, manager support, and talent practices accounted for more than twice the reported AI impact of individual effort.

That tracks with real workplace behavior.

Employees take cues from the people who evaluate them. If managers do not know how the tool fits into the work, employees will not take the risk of changing their process. If managers use AI casually but never clarify standards, employees may worry about quality, privacy, or whether AI use will be judged later.

This is why AI adoption cannot live only with the CEO, IT, or an innovation team. Line managers need to translate AI into local work:

- When should we use it?

- What output is acceptable?

- What still needs human review?

- What data should never go into the tool?

- What does good usage look like in this team?

Without that layer, adoption becomes vague. Vague tools do not become habits.

The Practical Takeaway

I do not think CEOs should stop investing in AI. That would be the wrong lesson.

The better lesson is that AI spend should follow workflow clarity. Before buying or building an AI system, leadership should be able to answer five questions:

| Question | Why it matters |
|---|---|
| What workflow is this changing? | Prevents the tool from becoming a side quest. |
| Who owns adoption after launch? | Keeps AI from becoming an IT-only project. |
| What will employees stop doing? | Creates room for the new behavior. |
| How will managers reinforce usage? | Turns adoption into team practice. |
| What evidence proves value? | Separates real impact from login activity. |

If those answers are weak, the company is not ready for a big rollout. Start smaller. Pick one team. Pick one painful workflow. Measure whether the work actually improves.

AI adoption is not about convincing employees to admire the technology. Adoption starts when the old way becomes the annoying way.

Sources: Gartner on employee AI adoption, Gallup's 2026 AI workplace indicator, Gallup on manager support and AI adoption, and Microsoft's 2026 Work Trend Index.
